Jetson Nano JetBot AI Kit User Guide

Introduction

This is an AI robot kit based on the Jetson Nano Developer Kit. It supports facial recognition, object tracking, automatic line following, collision avoidance, and more.

User Guides

- 1. Install Image

【Note】 The software part of this guide is mostly based on the NVIDIA JetBot wiki, which you can also refer to directly.

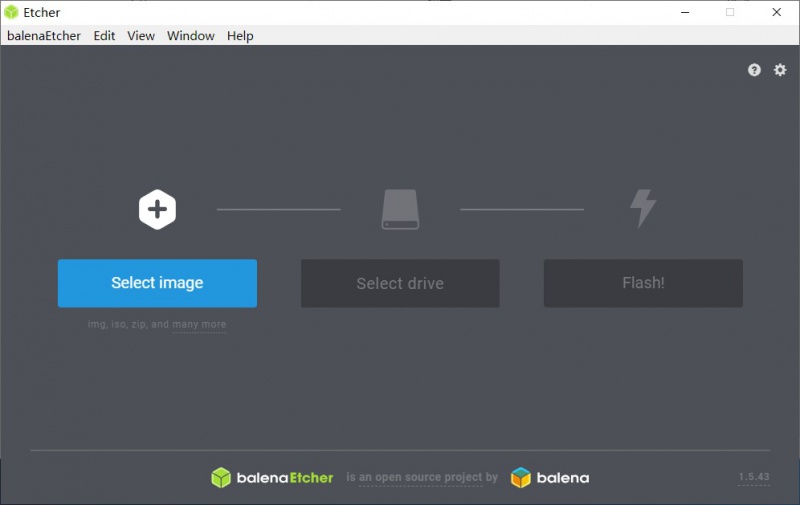

- Step 1. Write JetBot image to SD card

- Prepare an SD card of at least 64 GB

- Download the JetBot image provided by NVIDIA and unzip it

- Connect the SD card to your PC via a card reader

- Use the Etcher software to write the unzipped image to the SD card

- After writing, eject the SD card

- Step 2. Startup Jetson Nano Developer Kit

- Insert the SD card into the SD card slot of the Jetson Nano (the slot is on the underside of the Jetson Nano board)

- Connect an HDMI display, a keyboard, and a mouse to the Jetson Nano Developer Kit (if you don't have an HDMI or DP display, we recommend our HDMI display)

【Note】It is best to test the Jetson Nano Developer Kit before you assemble the JetBot

- Step 3. Connect JetBot to Wi-Fi

All the examples use Wi-Fi, so the JetBot must be connected to Wi-Fi first.

- Start the Jetson Nano Developer Kit. The default user name and password of the JetBot image are both jetbot

- Click the network icon at the top right of the desktop and connect to Wi-Fi

- Power off, then assemble the JetBot

- Start the Jetson Nano again. After booting, Ubuntu connects to Wi-Fi automatically, and the IP address is displayed on the OLED

- Step 4. Access JetBot via Web

- Once the network is set up, you can remove the peripherals and the power adapter.

- Turn the power switch of the JetBot to On

- After booting, the IP address is displayed on the OLED

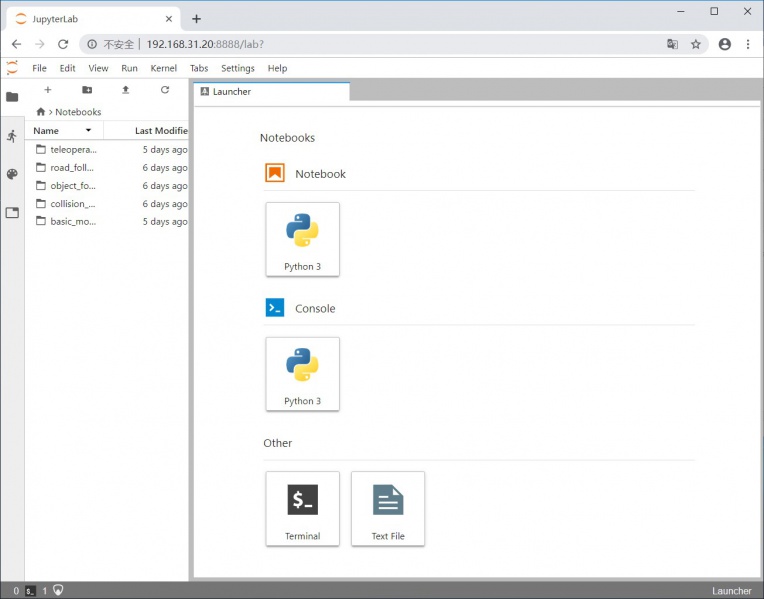

- Navigate to http://<jetbot_ip_address>:8888 from your desktop's web browser
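Before opening the browser, the address above can be built and checked from a PC. The following is a minimal sketch and is not part of the JetBot software: `jetbot_url` and `port_is_open` are hypothetical helper names, and the IP address is a placeholder for the one shown on the OLED.

```python
import socket


def jetbot_url(ip_address: str, port: int = 8888) -> str:
    """Build the notebook URL for a JetBot at the given IP address."""
    return f"http://{ip_address}:{port}"


def port_is_open(ip_address: str, port: int = 8888, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to the port succeeds within the timeout."""
    try:
        with socket.create_connection((ip_address, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False


if __name__ == "__main__":
    ip = "192.168.1.42"  # placeholder: use the address shown on the OLED
    print(jetbot_url(ip), "reachable:", port_is_open(ip))
```

If the port is not reachable, check that the PC and the JetBot are on the same Wi-Fi network.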

- Step 5. Install the latest software

The JetBot GitHub repository may contain software that is newer than that pre-installed on the SD card image. To install the latest software:

- Access the JetBot by going to http://<jetbot_ip_address>:8888

- Launch a new terminal. The default user name and password are both jetbot

- Get and install the latest JetBot repository from GitHub. The repository used here is modified by Waveshare and supports displaying the battery voltage. If you want to install the original code, follow the NVIDIA JetBot GitHub repository instead.

git clone https://github.com/waveshare/jetbot

cd jetbot

sudo python3 setup.py install

cd

sudo apt-get install rsync

rsync -r jetbot/notebooks ~/Notebooks

- Step 6. Configure power mode

To ensure that the Jetson Nano doesn't draw more current than the battery pack can supply, put the Jetson Nano in 5W mode.

- Launch a new terminal and enter the following command to select 5W power mode

sudo nvpmodel -m1

- Check that the mode is correct

sudo nvpmodel -q

【Note】m1: 5W power mode, m0: 10W power mode
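The check above can also be scripted. The sketch below parses the text printed by `sudo nvpmodel -q` and reports whether the 5W mode is active. It assumes the mode number is printed on a line by itself; the sample string is an assumed example of that format, not output captured from a device, and `parse_mode_id` is a hypothetical helper name.

```python
import re
from typing import Optional

FIVE_WATT_MODE = 1  # the mode selected with `sudo nvpmodel -m1`


def parse_mode_id(query_output: str) -> Optional[int]:
    """Return the mode number from `nvpmodel -q` output, or None if absent.

    Assumption: the mode number appears on a line by itself.
    """
    for line in reversed(query_output.strip().splitlines()):
        if re.fullmatch(r"\d+", line.strip()):
            return int(line.strip())
    return None


sample = "NV Power Mode: 5W\n1\n"  # assumed example output
mode = parse_mode_id(sample)
print("5W mode active:", mode == FIVE_WATT_MODE)
```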

- 2. Basic motion

- Access the JetBot by going to http://<jetbot_ip_address>:8888 and navigate to ~/Notebooks/basic_motion/

- Open the basic_motion.ipynb file and follow the notebook

【Note】You can click the ▶ icon to run the code, or select Run -> Run Selected Cells. Make sure the JetBot has enough space to move.

- 3. Teleoperations

- Access the JetBot by going to http://<jetbot_ip_address>:8888 and navigate to ~/Notebooks/teleoperation/

- Open the teleoperation.ipynb file and follow the notebook

- Connect the gamepad's USB adapter to your PC

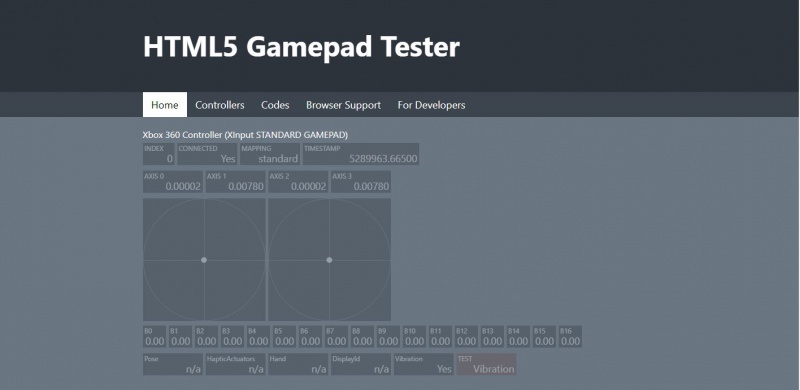

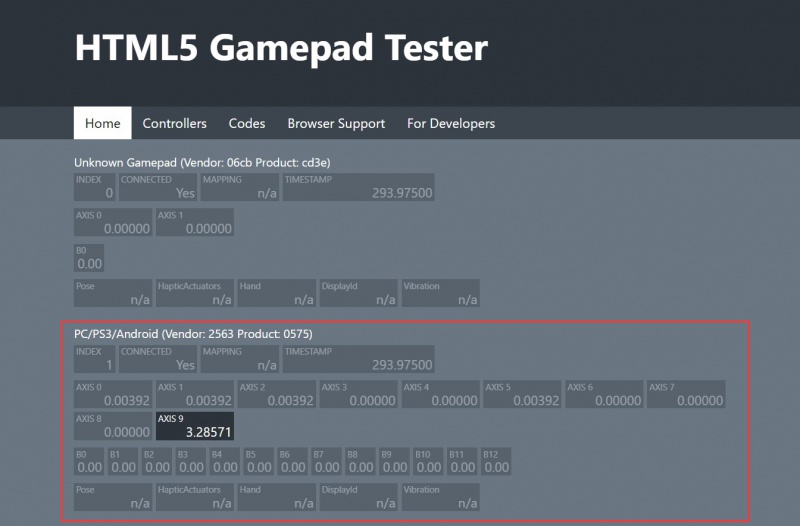

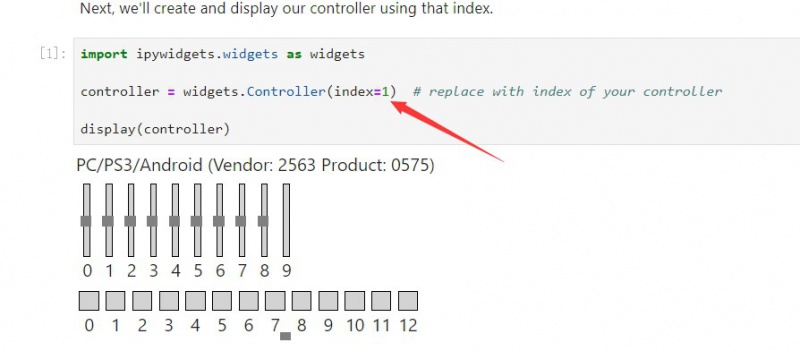

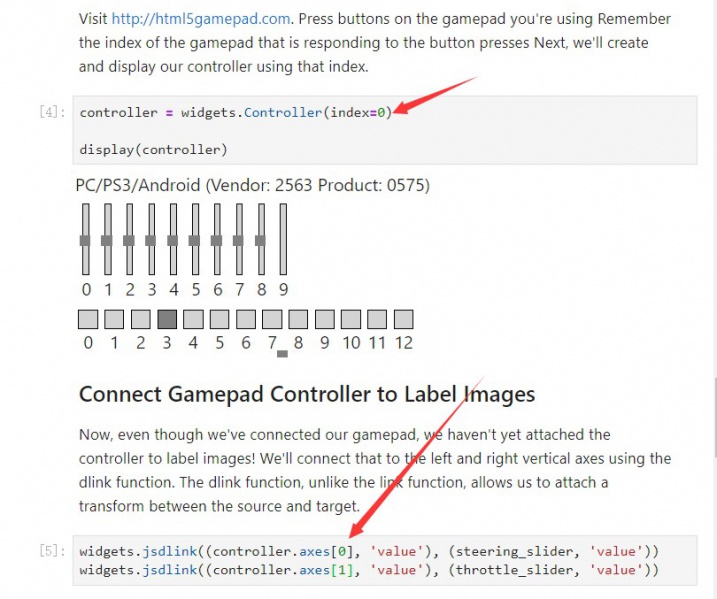

- Go to https://html5gamepad.com and check the INDEX of the gamepad

- Before you run the example, let's look at how the gamepad works.

- The included gamepad supports two working modes: PC/PS3/Android mode and Xbox 360 mode.

- The gamepad is set to PC/PS3/Android mode by default. This mode has two sub-modes, and you can press the HOME button to switch between them. In Mode 1, one LED lights up on the front panel, the right joystick is mapped to buttons 0, 1, 2 and 3, and the joysticks report only 0 or -1/1. In Mode 2, two LEDs light up on the front panel and the right joystick is mapped to axes[2] and axes[5]. In this mode, the joysticks do not report intermediate values.

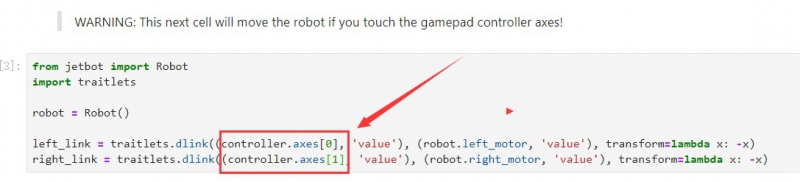

- To switch between PC/PS3/Android mode and Xbox 360 mode, long-press the HOME button for about 7 s. In Xbox mode, the left joystick is mapped to axes[0] and axes[1], and the right joystick is mapped to axes[2] and axes[3]. This is the mode the NVIDIA examples use, so we recommend setting your gamepad to it. Otherwise, you need to modify the code.

- Modify the index, then run and test the gamepad

- Modify the axes values if required. Here we use axes[0] and axes[1]

- For more details, please refer to the notebook
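To illustrate what adjusting the axes involves: gamepad axes report values from -1 to 1, and a stick pushed forward reads negative, so the value is usually inverted before it drives a motor. The sketch below assumes one axis per motor; `axis_to_motor` and its `deadzone` parameter are hypothetical and are not taken from the notebook.

```python
def axis_to_motor(axis_value: float, deadzone: float = 0.05) -> float:
    """Convert a gamepad axis reading into a motor value in [-1, 1].

    The sign is flipped so that pushing the stick forward (negative reading)
    gives a positive motor value. Readings inside the deadzone are treated
    as zero so the robot stays still when the stick is released.
    """
    value = -axis_value
    if abs(value) < deadzone:
        return 0.0
    return max(-1.0, min(1.0, value))


# Stick fully forward, slightly off-centre, and half-way back.
for reading in (-1.0, 0.02, 0.5):
    print(reading, "->", axis_to_motor(reading))
```

In a mode where the joysticks report only 0 or -1/1, this mapping can only produce stop, full forward, or full reverse.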

- 4. Collision avoidance

In this example, we'll collect an image classification dataset that will be used to help keep JetBot safe! We'll teach JetBot to detect two scenarios: free and blocked. We'll use this AI classifier to prevent JetBot from entering dangerous territory.

- Step 1. Collect data on JetBot

- Access the JetBot by going to http://<jetbot_ip_address>:8888 and navigate to ~/Notebooks/collision_avoidance/

- Open the data_collection.ipynb file and follow the notebook

- This model was trained on a limited dataset using the IMX219-160 Camera with wide-angle attachment.

- Place the JetBot in a variety of locations and collect as much data as possible
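The data collection step amounts to saving each camera frame into one folder per class. The sketch below shows that layout under stated assumptions: the folder names `free` and `blocked` follow the two scenarios described above, the helper names are hypothetical, and placeholder bytes stand in for real JPEG frames.

```python
import os
import tempfile
import uuid


def make_dataset_dirs(root: str) -> dict:
    """Create one folder per class and return a mapping of label -> path."""
    paths = {}
    for label in ("free", "blocked"):
        path = os.path.join(root, label)
        os.makedirs(path, exist_ok=True)
        paths[label] = path
    return paths


def save_snapshot(directory: str, jpeg_bytes: bytes) -> str:
    """Write one image under a unique file name and return its path."""
    image_path = os.path.join(directory, f"{uuid.uuid1()}.jpg")
    with open(image_path, "wb") as f:
        f.write(jpeg_bytes)
    return image_path


def count_images(directory: str) -> int:
    """Count the .jpg files in a class folder."""
    return len([name for name in os.listdir(directory) if name.endswith(".jpg")])


with tempfile.TemporaryDirectory() as root:  # temporary folder for the demo only
    dirs = make_dataset_dirs(root)
    save_snapshot(dirs["free"], b"placeholder bytes")
    save_snapshot(dirs["blocked"], b"placeholder bytes")
    print({label: count_images(path) for label, path in dirs.items()})
```

Keeping the two counts roughly balanced is a common choice when training a two-class classifier.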

- Step 2. Train neural network

- Access the JetBot by going to http://<jetbot_ip_address>:8888 and navigate to ~/Notebooks/collision_avoidance/

- Open and follow the train_model.ipynb notebook

- Step 3. Run live demo on JetBot

- Access the JetBot by going to http://<jetbot_ip_address>:8888 and navigate to ~/Notebooks/collision_avoidance/

- Open and follow the live_demo.ipynb notebook

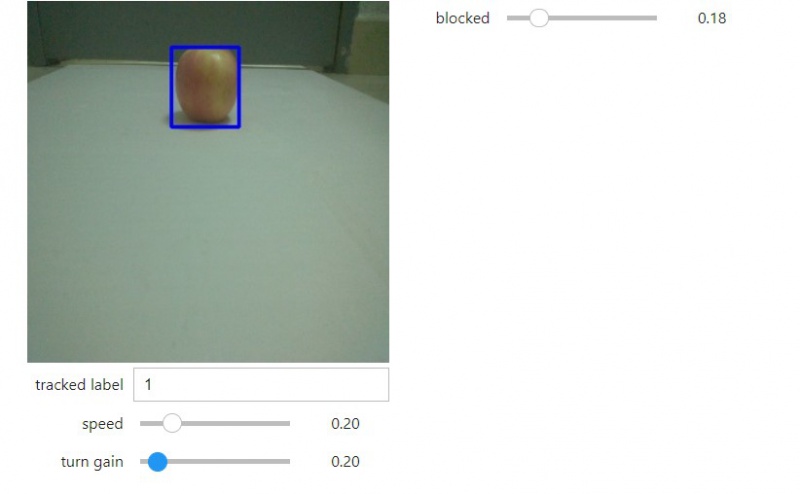
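Conceptually, the live demo turns the classifier's two scores into a probability of being blocked and picks a motion from it. The following is a simplified sketch of that decision, not the notebook's code: the 0.5 threshold, the action names, and the function names are assumptions for illustration.

```python
import math


def blocked_probability(logit_blocked: float, logit_free: float) -> float:
    """Softmax over two class scores, returning the 'blocked' probability."""
    m = max(logit_blocked, logit_free)  # subtract the max for numerical stability
    e_blocked = math.exp(logit_blocked - m)
    e_free = math.exp(logit_free - m)
    return e_blocked / (e_blocked + e_free)


def choose_action(prob_blocked: float, threshold: float = 0.5) -> str:
    """Drive forward while the path looks free, otherwise turn away."""
    return "forward" if prob_blocked < threshold else "turn_left"


for scores in ((-2.0, 2.0), (3.0, -1.0)):
    p = blocked_probability(*scores)
    print(scores, round(p, 3), choose_action(p))
```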

- 5. Object following

Here we use the COCO dataset.

- Access the JetBot by going to http://<jetbot_ip_address>:8888 and navigate to ~/Notebooks/object_following/

- Before running the example, upload the pre-trained ssd_mobilenet_v2_coco.engine model to the current directory. The model trained in the previous chapter is required as well.

- Open and follow the live_demo.ipynb notebook

- 6. Line tracking

In this chapter, we collect data, train a model, and run a live demo so that the robot follows a line automatically.

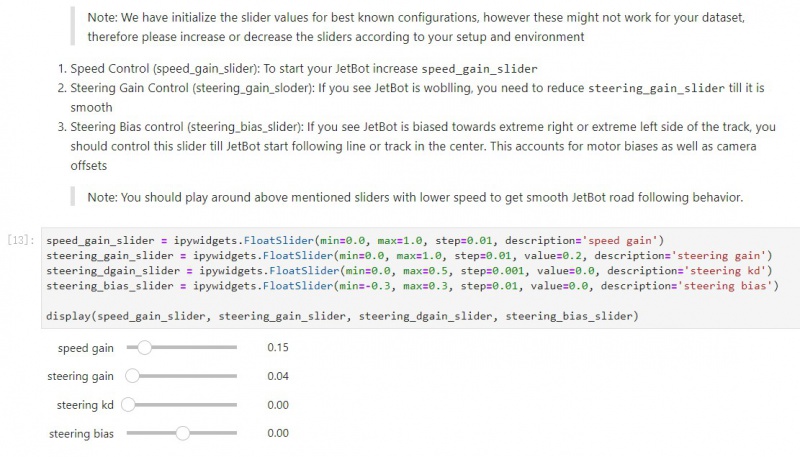

- Step 1. Collect data by JetBot

- Access the JetBot by going to http://<jetbot_ip_address>:8888 and navigate to ~/Notebooks/road_following/

- Open the data_collection.ipynb file

- Run the code. A video is played that you can follow

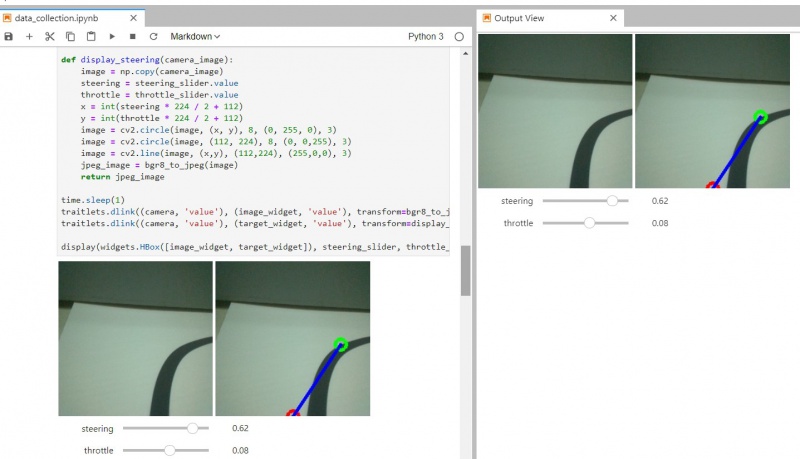

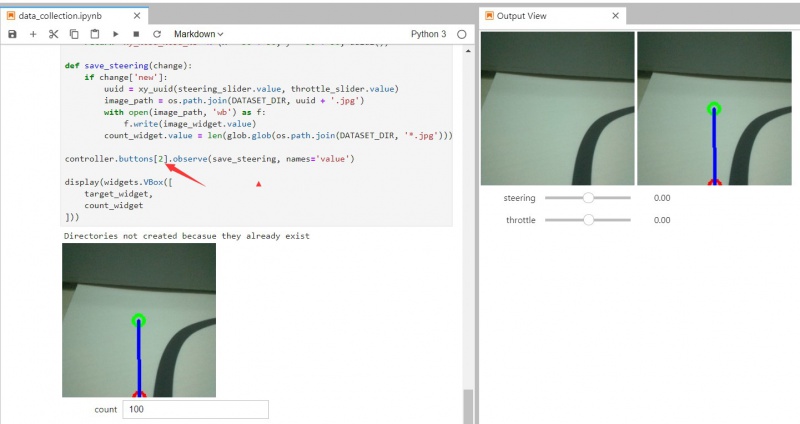

- The image captured by the camera shows a green point and a blue line. They mark the path the robot is expected to follow

- The following steps are similar to [#3. Teleoperation]: modify the index and axes values

【Note】The axes buttons used here should be analog buttons, which support

- Modify the button value used for capturing (you can also keep the default setting)

- Collect data. Place the JetBot at different positions along the line and use the gamepad to move the green point onto the black line. The blue line is the path the JetBot is expected to take. Press the capture button to capture a picture. Collect as many pictures as possible; count shows the number of pictures captured.

【Note】If the gamepad is inconvenient, you can set the position of the green point by dragging the steering and throttle sliders.

- Save pictures

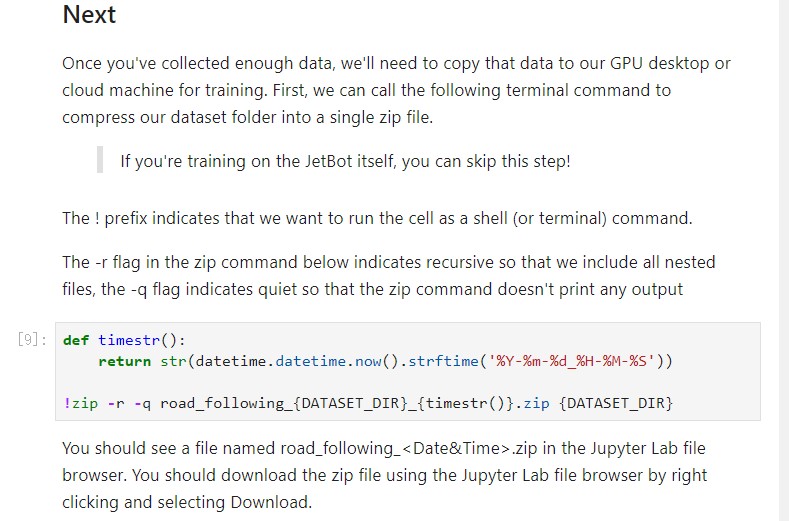

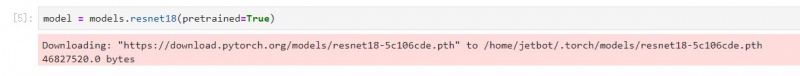
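Each captured picture has to carry the position of the green point so that training can use it as the label. One way to do this is to encode the x and y values in the file name. The sketch below shows such a scheme; the exact name format and the helper names are assumptions for illustration and may differ from what the notebook writes.

```python
import uuid


def encode_xy_name(x: float, y: float) -> str:
    """Map x, y in [-1, 1] to integers in [0, 100] and embed them in the name."""
    return "xy_%03d_%03d_%s.jpg" % (round(x * 50 + 50), round(y * 50 + 50), uuid.uuid1())


def decode_xy_name(name: str) -> tuple:
    """Recover x, y (to the nearest 0.02) from a name made by encode_xy_name."""
    x = (int(name[3:6]) - 50) / 50.0
    y = (int(name[7:10]) - 50) / 50.0
    return x, y


name = encode_xy_name(0.5, -1.0)
print(name, decode_xy_name(name))
```

Storing the label in the file name keeps each picture self-describing, so no separate annotation file is needed.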

- Step 2. Training model

- Access the JetBot by going to http://<jetbot_ip_address>:8888 and navigate to ~/Notebooks/road_following/

- Open the train_model.ipynb file

- If you use the data collected above, you don't need to unzip any files in the next step

- If you use external data, change the name road_following.zip to the corresponding file name and run the cell

- Download the model

- Train the model. This generates the best_steering_model_xy.pth file

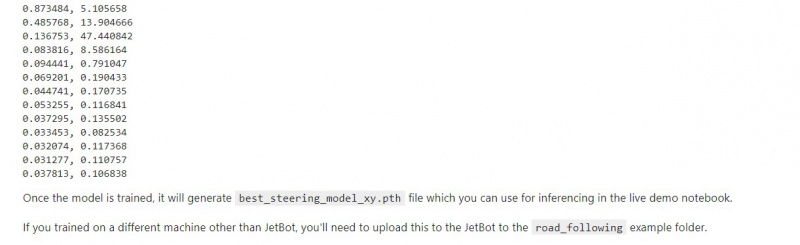

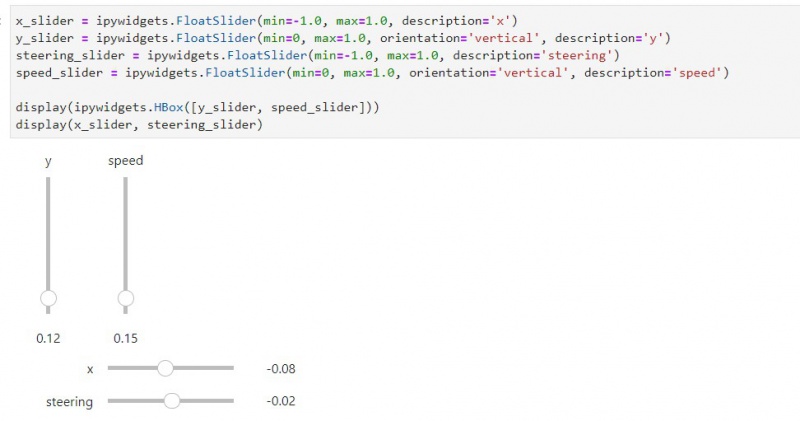

- Step 3. Road following

- Access the JetBot by going to http://<jetbot_ip_address>:8888 and navigate to ~/Notebooks/road_following/

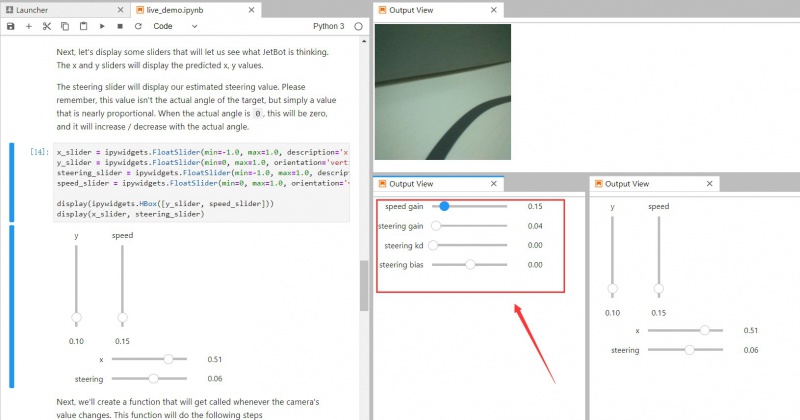

- Open the live_demo.ipynb file

- Load the model and open the camera for live video.

- You can drag the sliders to modify the parameters

- x and y are the predicted values. Speed is the VSL of the JetBot, and steering is its steering speed

- Move the JetBot by changing the speed gain

- 【Note】 Do not set the speed gain too high; otherwise the JetBot may run too fast and leave the track. You can also reduce the steering value to make the JetBot's motion smoother.
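To show how the predicted x, y values and the slider parameters can interact, here is a simplified proportional-derivative steering sketch. It illustrates the general idea and is not the notebook's exact code: the class name, parameter names, and default values are assumptions.

```python
import math


def clamp(value: float, low: float = 0.0, high: float = 1.0) -> float:
    """Limit a motor value to the allowed range."""
    return max(low, min(high, value))


class SteeringController:
    """Turn a predicted target point (x, y) into left/right motor values."""

    def __init__(self, speed=0.2, steering_gain=0.05, steering_dgain=0.0, steering_bias=0.0):
        self.speed = speed
        self.steering_gain = steering_gain
        self.steering_dgain = steering_dgain
        self.steering_bias = steering_bias
        self.last_angle = 0.0

    def update(self, x: float, y: float) -> tuple:
        # Angle of the target relative to straight ahead (0 means no turn needed).
        angle = math.atan2(x, y)
        steering = (
            angle * self.steering_gain
            + (angle - self.last_angle) * self.steering_dgain
            + self.steering_bias
        )
        self.last_angle = angle
        # Steering is added to one wheel and subtracted from the other.
        return clamp(self.speed + steering), clamp(self.speed - steering)


controller = SteeringController(speed=0.2, steering_gain=0.1)
print(controller.update(0.0, 0.5))  # target straight ahead
print(controller.update(0.5, 0.5))  # target to the right
```

With a higher speed the same steering correction has less time to act, which is why a large speed gain tends to push the robot off the line.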