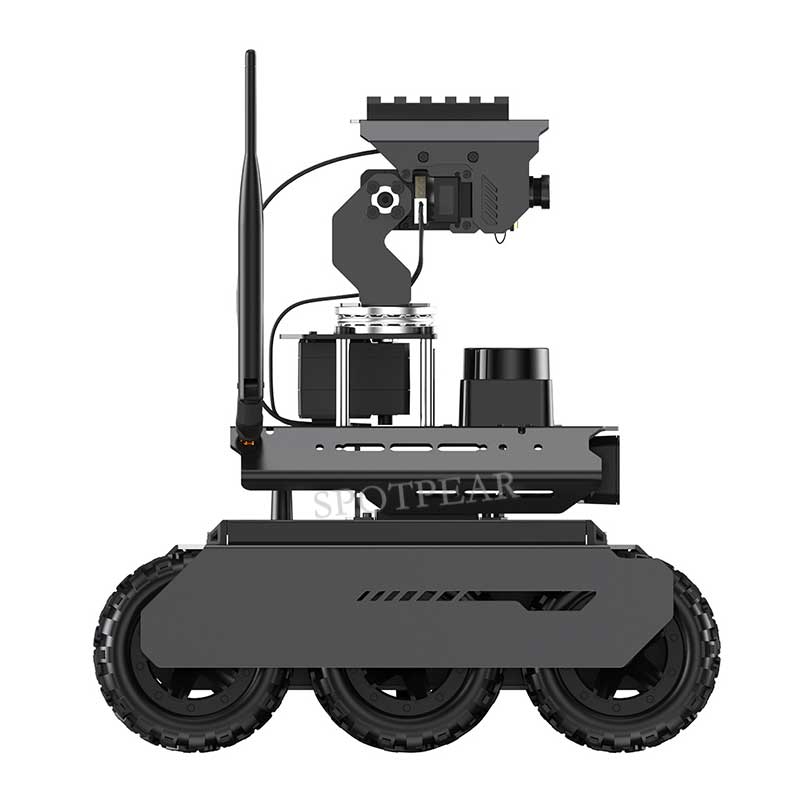

UGV Rover ROS2 PT AI OpenCV Robot Car MediaPipe For Jetson Orin Nano

$899

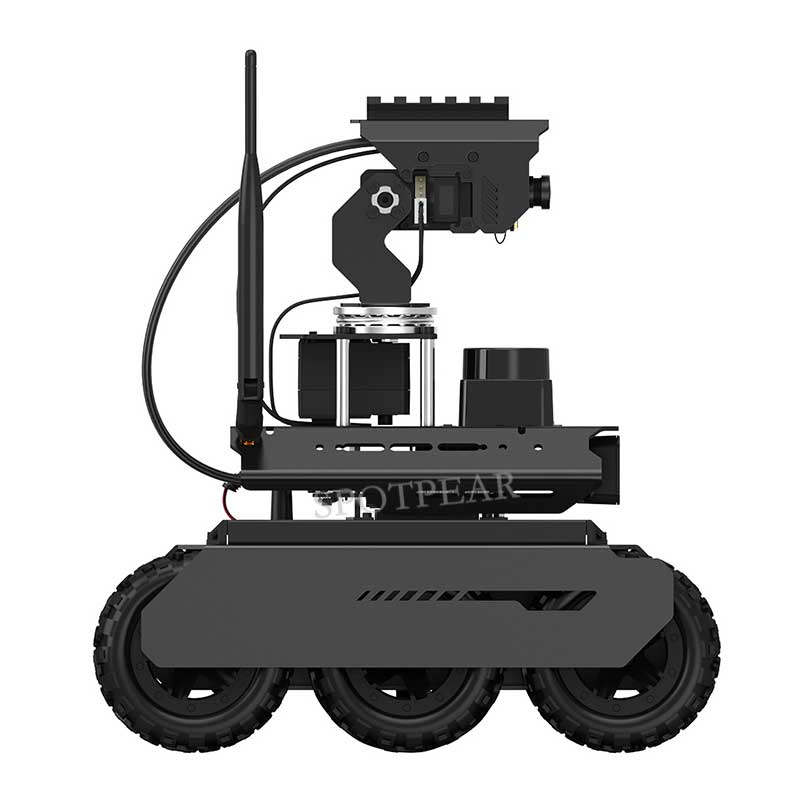

UGV Rover

Open-Source 6 Wheels 4WD AI Robot, Based On ROS 2

Offers More Creative Potential And Possibilities

AI Robot Car Recommend

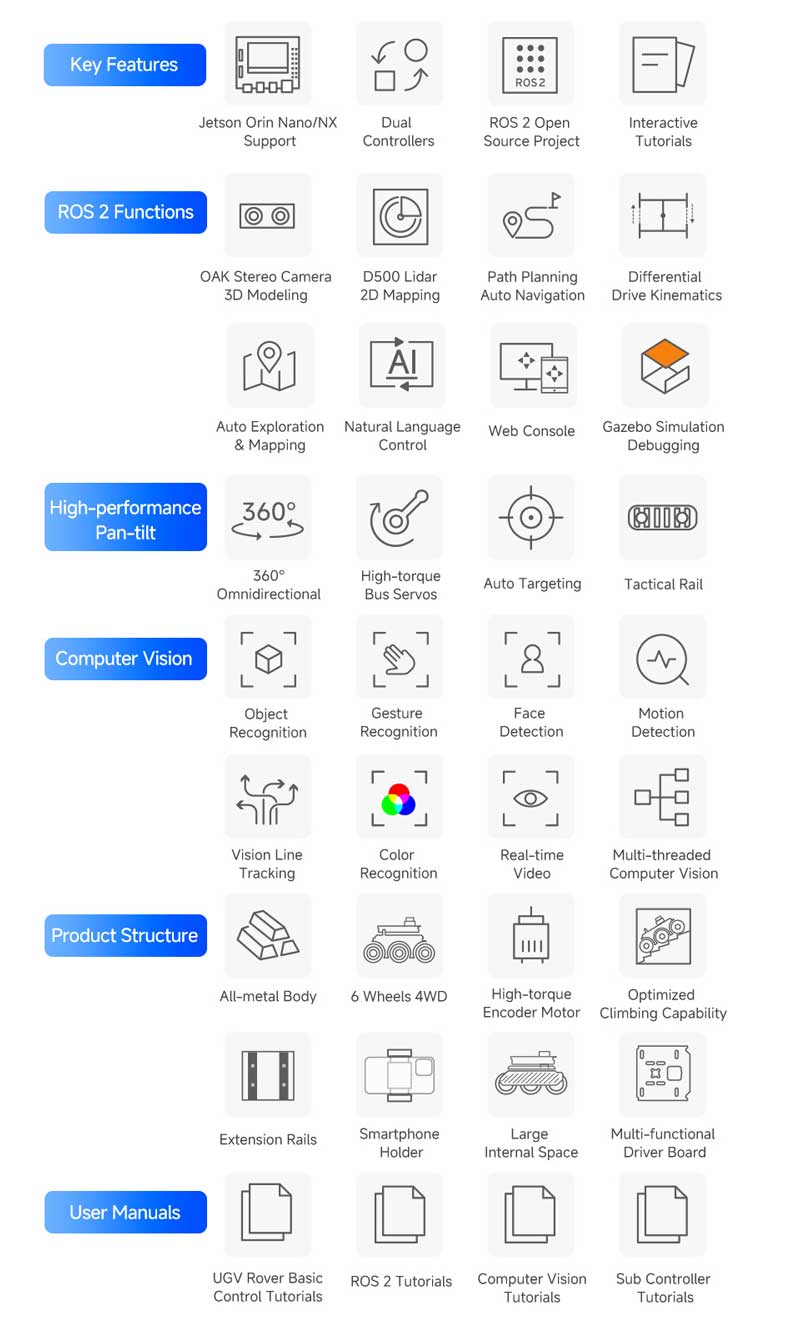

The UGV Rover ROS2 Kit is an AI robot designed for exploration and creation with excellent expansion potential, based on ROS 2 and equipped with Lidar and depth camera, seamlessly connecting your imagination with reality. Suitable for tech enthusiasts, makers, or beginners in programming, it is your ideal choice for exploring the world of intelligent technology.

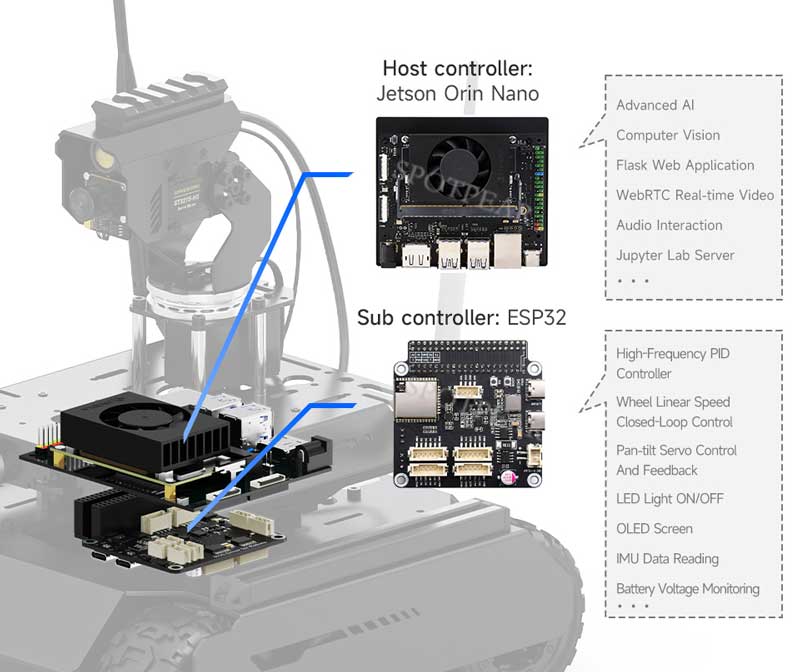

Equipped with a high-performance Jetson Orin series computer to handle complex strategies and functions and to inspire your creativity. The dual-controller design combines the high-level AI functions of the host controller with the high-frequency basic operations of the sub controller, making every operation accurate and smooth.

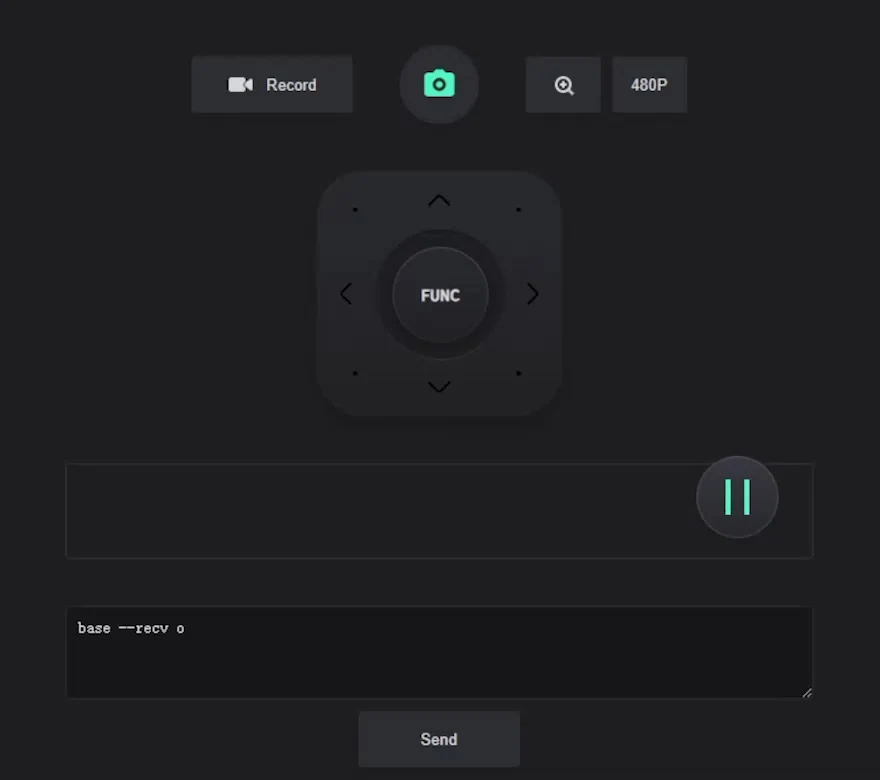

Easy to control remotely via the UGV Rover Web Application: there is no software to download, just open your browser and start your journey. You can use the basic ROS 2 functions of the robot without installing a virtual machine on the PC. With support for high-frame-rate real-time video transmission and multiple AI computer vision functions, the UGV Rover is an ideal platform to realize your ideas and creativity!

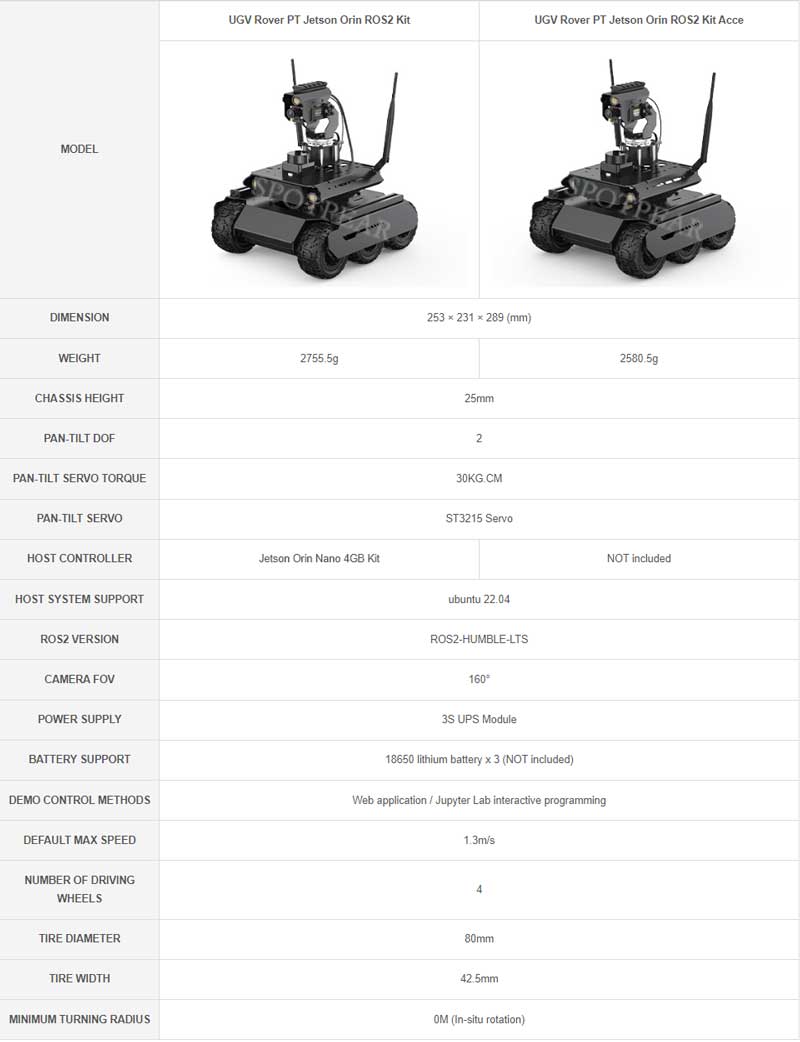

Available with the host controller (Jetson Orin Nano 4GB) included, or you can choose the Acce Version if you already have a Jetson Orin module (including its baseboard).

Optional For Jetson Orin Nano 4GB Kit, Provides Up To 20 TOPS AI Performance. Comes With A 128 GB NVMe Solid State Drive, High-Speed Reading/Writing, To Meet The Development Needs Of Large AI Projects

Dual-Controller Design, Provides Efficient Collaboration And Upgraded Performance

The Host Controller Adopts Jetson Orin Nano For AI Vision And Strategy Planning, And The Sub Controller Uses ESP32 For Motion Control And Sensor Data Processing.

This Design Improves The Processing Efficiency And Response Speed Of The Host Computer, Which No Longer Has To Bear All Tasks, While The Sub Controller Focuses On Basic Functions To Ensure Accurate And Smooth Robot Actions.

Ensures Advanced Decision-Making Performance Of Robot And System Compatibility At The Same Time. Supports All AI Functions Of The Previous AI Kit Series Products

Equipped With 5MP 160° Wide-Angle Camera For Capturing Every Detail

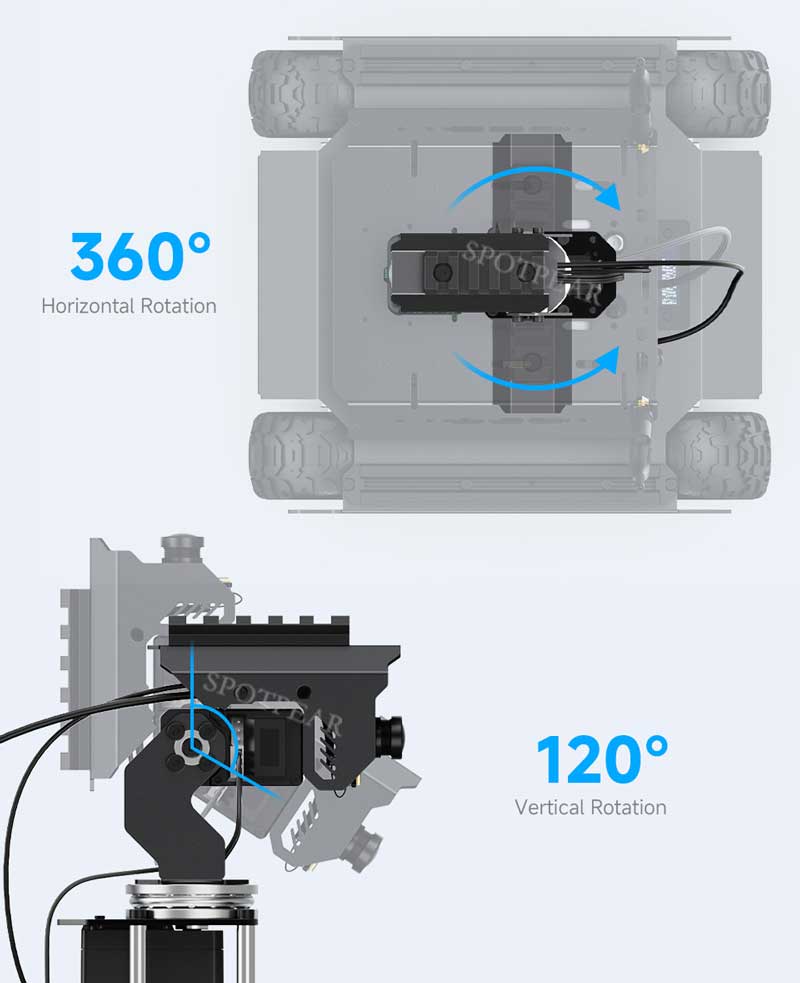

Supports Pan-Tilt drag-and-drop control via the mouse or touchpad of a laptop, providing a control experience similar to FPS games

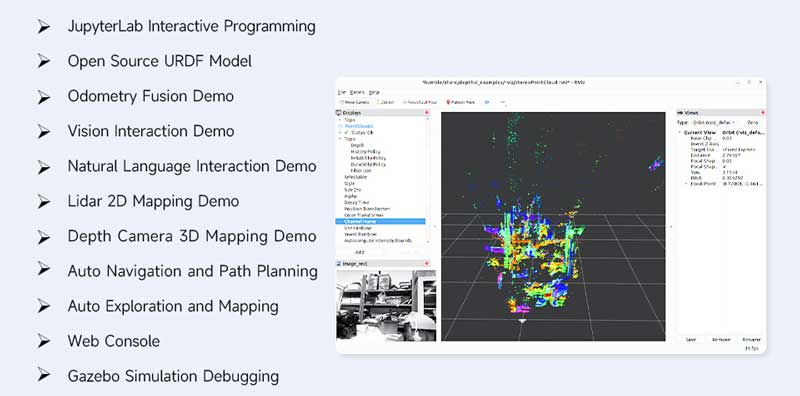

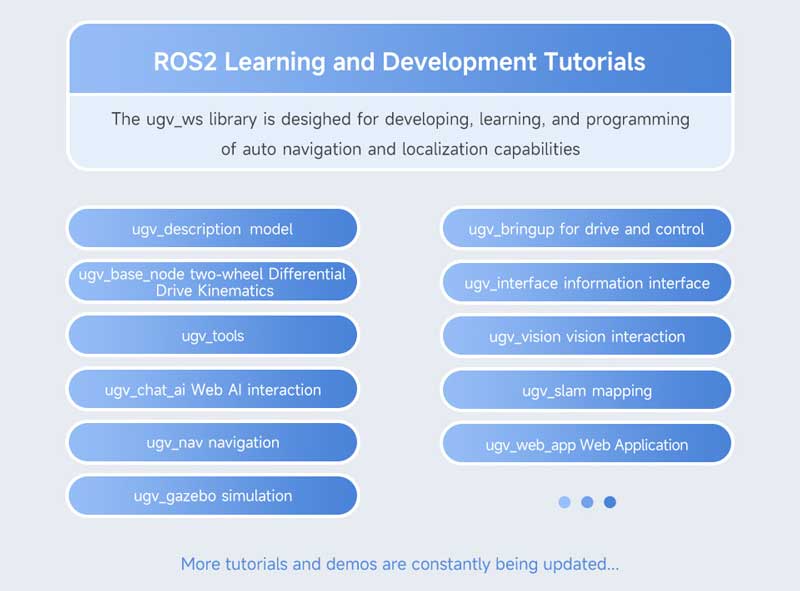

Open Source For All ROS 2 Development Resources

Open Source For All Demos Of Host Controller And Sub Controller, Including Robot Description File (URDF Model), Sensor Data Processing Node Of Sub Controller, Kinematic Control Algorithms, And Various Remote Control Nodes
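
As a rough illustration of what a kinematic control step can look like, the sketch below converts a body velocity command (forward speed and turn rate) into left and right wheel speeds using a simple differential-drive model. It is not taken from the open-source demos: the function name, the `track_width` value and the `max_speed` limit are all assumptions chosen for the example, and a 6-wheel 4WD chassis will slip more in turns than this ideal model suggests.

```python
from dataclasses import dataclass


@dataclass
class WheelSpeeds:
    left: float   # left-side wheel speed in m/s
    right: float  # right-side wheel speed in m/s


def twist_to_wheel_speeds(linear, angular, track_width=0.2, max_speed=1.0):
    """Convert a body velocity (m/s, rad/s) into left/right wheel speeds.

    track_width and max_speed are placeholder values, not the robot's specs.
    Positive angular velocity means a counter-clockwise (left) turn.
    """
    left = linear - angular * track_width / 2.0
    right = linear + angular * track_width / 2.0

    # If either side exceeds the limit, scale both so the turn ratio is kept.
    peak = max(abs(left), abs(right))
    if peak > max_speed:
        scale = max_speed / peak
        left *= scale
        right *= scale
    return WheelSpeeds(left, right)


if __name__ == "__main__":
    print(twist_to_wheel_speeds(0.5, 0.0))  # straight ahead
    print(twist_to_wheel_speeds(0.0, 1.0))  # turn in place
```

Scaling both sides together, rather than clipping each one separately, keeps the commanded turning curvature when the request exceeds the speed limit.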

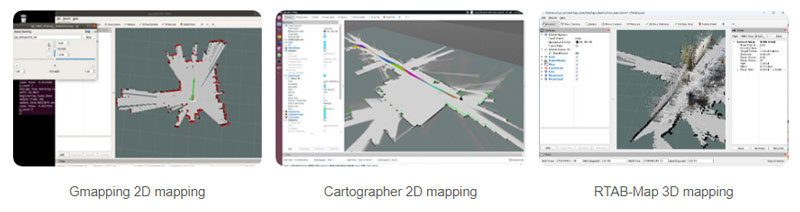

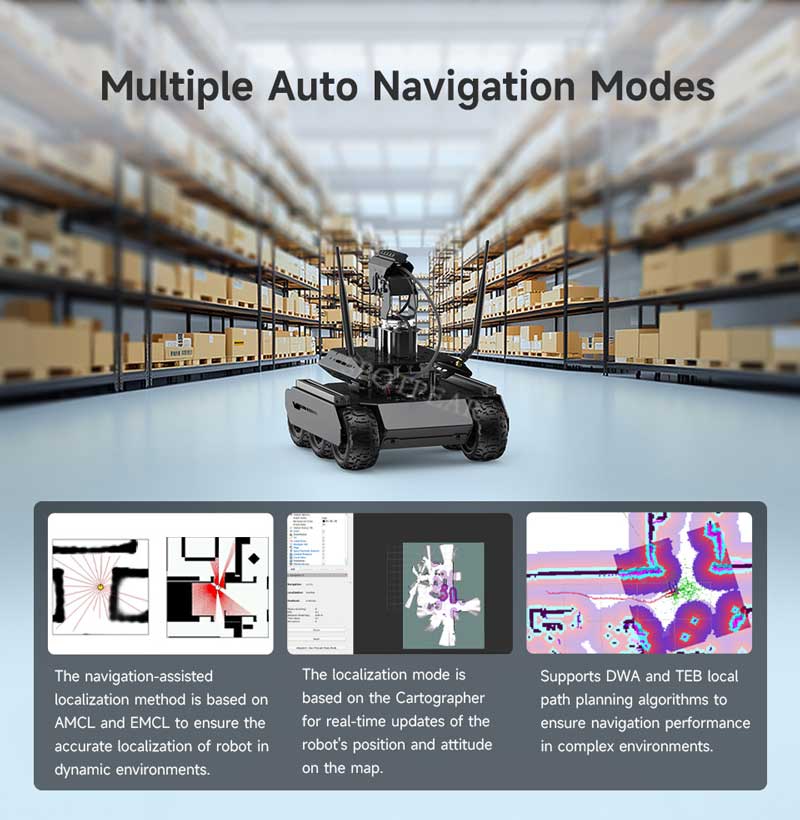

Integrates Various ROS 2 Mapping Methods

Meet The Needs Of Mapping In Different Scenarios

Multiple Cost-Effective Sensors

Adopts Multiple Sensors With High Cost-Effectiveness And Practicality

Auto Exploration And Mapping

Using SLAM Toolbox To Implement Mapping And Navigation Functions Simultaneously In Unknown Environments, Simplifying The Task Execution Process, Which Is Suitable For Unmanned Applications

Supports Natural Language Interaction

Adopts Large Language Model (LLM) Technology So That Users Can Give Commands To The Robot In Natural Language, Enabling It To Perform Tasks Such As Moving, Mapping, And Navigation

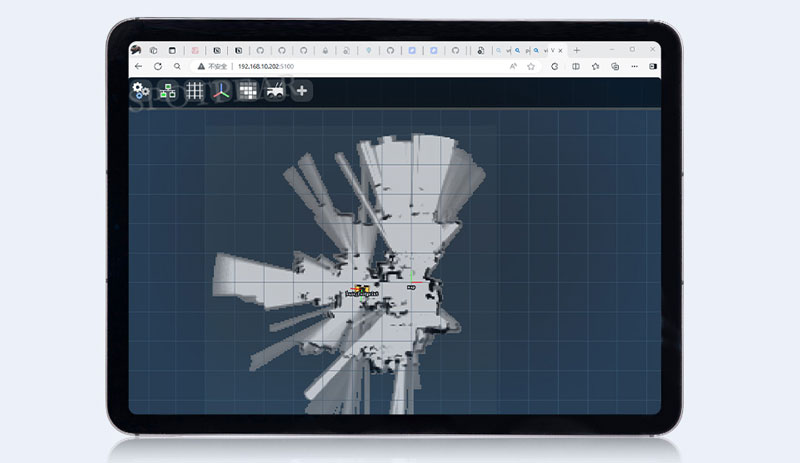

Provides Web Console Tool

You Can Use The Basic ROS 2 Functions On The Web Without Installing A Virtual Machine On The PC. Supports Cross-Platform Operation On Android Or iOS Tablets. Users Can Simply Open A Browser And Control The Robot For Moving, Mapping, Navigation, And Other Operations

ROS2 Node Command Interaction

Users Can Send Control Commands To The Robot From A Script To Perform Operations Such As Moving, Obtaining The Current Location, And Navigating To A Specific Point, Which Is More Convenient For Secondary Development

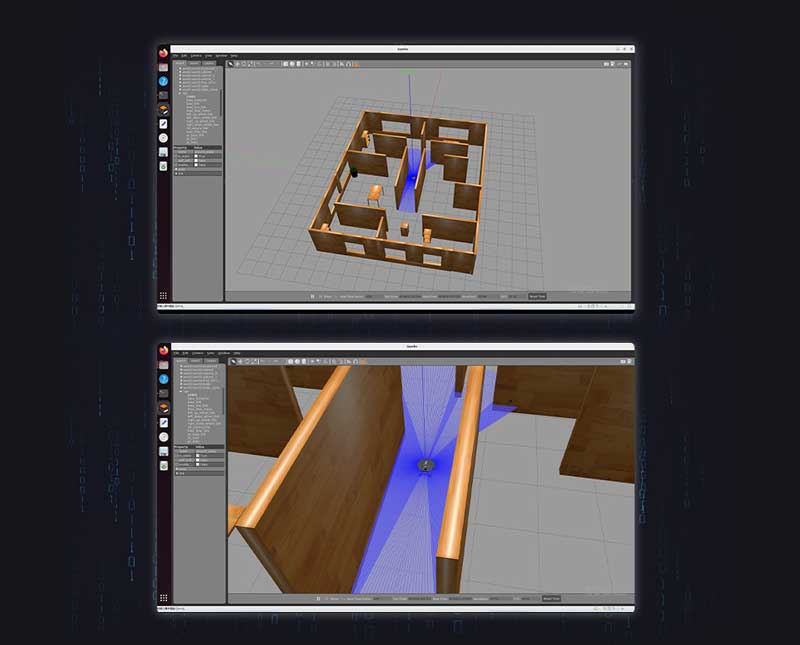

Gazebo Simulation Debugging

Provides Gazebo Robot Model And Complete Functionality Library For Simulation Debugging, Helping You Verify And Test The System During The Early Stages Of Development

Continuing The Adventure As Night Falls

High-Brightness LED Light For Ensuring Clear Images In Low-Light Conditions

Suitable For Tactical Extension

Comes With 21mm Wide Rail And 30KG.CM High Precision & High-Torque Bus Servo For Tactical Extension

for reference only, the accessories in the picture above are NOT included

Standard Aluminum Rail

Comes With 2 × 1020 European Standard Profile Rails, And Supports Installing Additional Peripherals Via The Boat Nuts To Meet Different Needs, Easily Expanding The Special Operation Scenarios

Supports Driving In Complex Terrain

6 Wheels × 4WD Design: Six Wheels Provide A More Stable Platform And Larger Contact Area, While 4WD Provides Stronger Power And Traction To Deal With Various Terrains And Obstacles

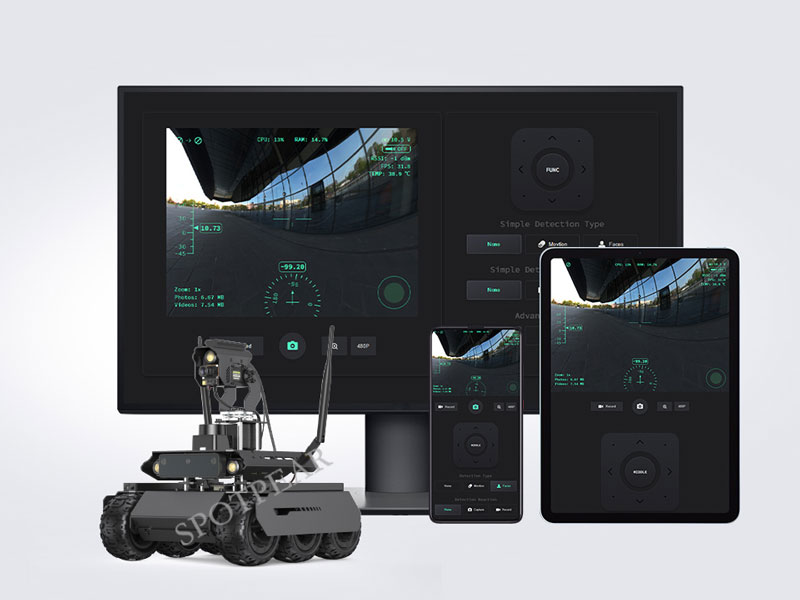

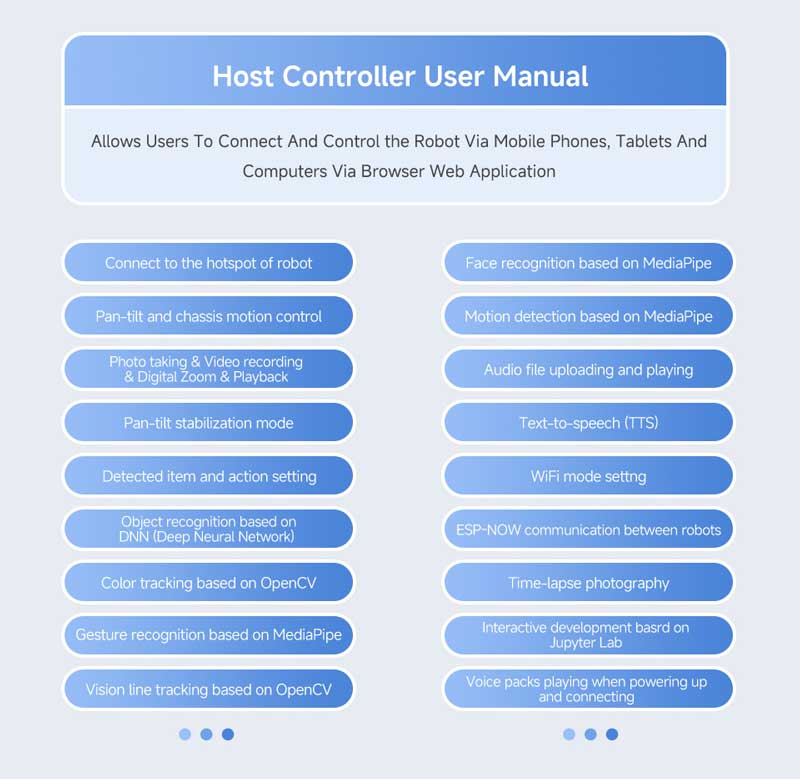

Easy To Control Via Cross-Platform Web Application

No App Installation Required. Users Can Connect And Control The Robot From Mobile Phones, Tablets And Computers Through A Browser-Based Web App. On A PC With Keyboard, Supports Shortcut Key Control Such As WASD As Well As Mouse Control

WebRTC Real-Time Video Transmission

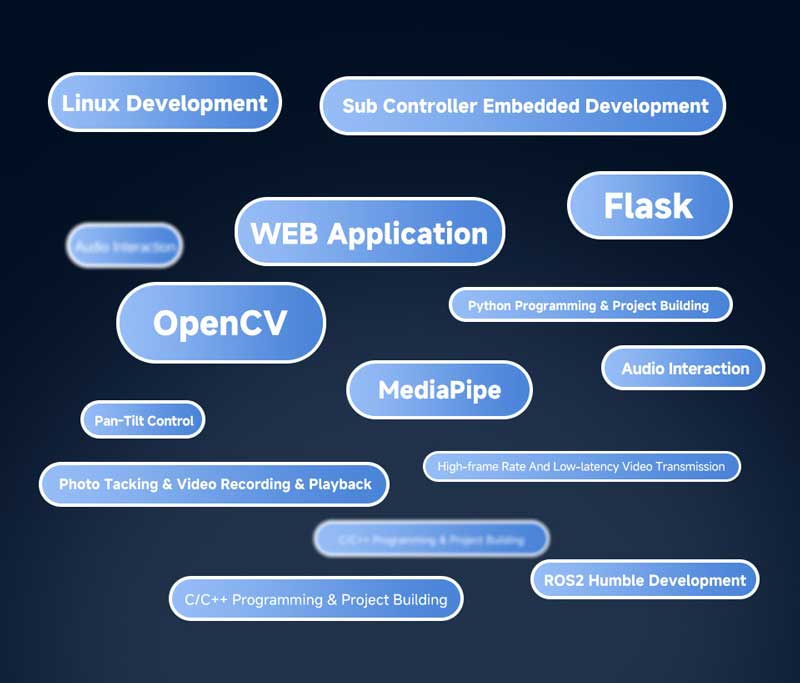

Adopts Flask Lightweight Web Application, Based On WebRTC Ultra-Low Latency Real-Time Transmission, Using Python Language And Easy To Extend, Working Seamlessly With OpenCV

Recognition, Tracking, And Targeting

Based On OpenCV To Achieve Color Recognition And Automatic Targeting. Supports One-Key Pan-Tilt Control And Automatic LED Lighting, Allows Expansion For More Functions
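
To give a feel for how color recognition and targeting can work, here is a minimal sketch that thresholds pixels by hue, finds the centroid of the matching region, and reports how far that centroid is from the image center. It uses only Python's standard `colorsys` module on a small list-of-lists image so it can run anywhere; the actual demos are based on OpenCV, and the function names and threshold values here are assumptions made for illustration.

```python
import colorsys


def find_color_centroid(image, hue_range=(0.10, 0.20), min_sat=0.5, min_val=0.3):
    """Return the (x, y) centroid of pixels inside the HSV range, or None.

    image is a list of rows, each row a list of (r, g, b) tuples in 0..255.
    The default hue range roughly covers yellow; all thresholds are assumed.
    """
    sum_x = sum_y = count = 0
    for y, row in enumerate(image):
        for x, (r, g, b) in enumerate(row):
            h, s, v = colorsys.rgb_to_hsv(r / 255.0, g / 255.0, b / 255.0)
            if hue_range[0] <= h <= hue_range[1] and s >= min_sat and v >= min_val:
                sum_x += x
                sum_y += y
                count += 1
    if count == 0:
        return None
    return (sum_x / count, sum_y / count)


def targeting_error(centroid, width, height):
    """Offset of the centroid from the image center, normalized to [-1, 1].

    Assumes width and height are both greater than 1.
    """
    half_w = (width - 1) / 2.0
    half_h = (height - 1) / 2.0
    return ((centroid[0] - half_w) / half_w, (centroid[1] - half_h) / half_h)


if __name__ == "__main__":
    black, yellow = (0, 0, 0), (255, 255, 0)
    frame = [[black] * 5 for _ in range(5)]
    frame[1][3] = frame[1][4] = yellow
    target = find_color_centroid(frame)
    print(target, targeting_error(target, 5, 5))
```

A pan-tilt controller could feed the two normalized errors into its pan and tilt axes to keep the target centered; that control loop is left out here.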

Face Detection:

Automatic Picture Or Video Capturing

Based On OpenCV To Achieve Face Recognition, Supports Automatic Photo Taking Or Video Recording Once A Face Is Recognized

Intelligent Object Recognition

Supports Recognizing Many Common Objects With The Default Model

Gesture Recognition:

AI Interaction With Body Language

Combines OpenCV And MediaPipe To Realize Gesture Control Of Pan-Tilt And LED

Gesture control for photo taking

LED ON/OFF and blacklight control

More MediaPipe Demos For Easily Creating Complex Video Processing Tasks

MediaPipe is an open-source framework developed by Google for building cross-platform multimedia processing pipelines. It provides a set of pre-built components and tools, and its high-performance processing capability enables the robot to respond to and process complex multimedia inputs, such as real-time video analytics.

Face Recognition

Attitude Detection

Vision Line Tracking For Autonomous Driving

The Computer Vision Demo Integrates A Vision Line Tracking Function And Comes With Yellow Tape For Easily Planning A Path For The Robot. Users Can Understand The Basic Algorithms Of Autonomous Driving With This Simple Demo
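
One basic idea behind line tracking is proportional steering: measure how far the line sits from the center of the camera view, then turn toward it in proportion to that offset. The sketch below shows this on a single row of a binary mask (1 where the tape was detected). It is an assumed, simplified illustration rather than the demo's actual code, and the names `line_offset`, `steering_command`, `base_speed` and `kp` are hypothetical.

```python
def line_offset(mask_row):
    """Normalized horizontal offset of the line in [-1, 1], or None if unseen.

    mask_row is a list of 0/1 values for one image row (length > 1 assumed).
    Negative means the line is left of center, positive means right.
    """
    xs = [x for x, on in enumerate(mask_row) if on]
    if not xs:
        return None
    center = (len(mask_row) - 1) / 2.0
    return (sum(xs) / len(xs) - center) / center


def steering_command(offset, base_speed=0.3, kp=1.0):
    """Return (linear, angular) velocity for a given line offset.

    Positive angular velocity is a left turn, so a line to the right
    (positive offset) produces a negative angular command.
    The robot stops when the line is not visible.
    """
    if offset is None:
        return (0.0, 0.0)
    return (base_speed, -kp * offset)


if __name__ == "__main__":
    row = [0, 0, 0, 0, 0, 0, 1, 1, 0]  # tape detected right of center
    print(steering_command(line_offset(row)))
```

A larger `kp` makes the robot correct more aggressively but can cause it to oscillate around the line, which is a useful thing to experiment with.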

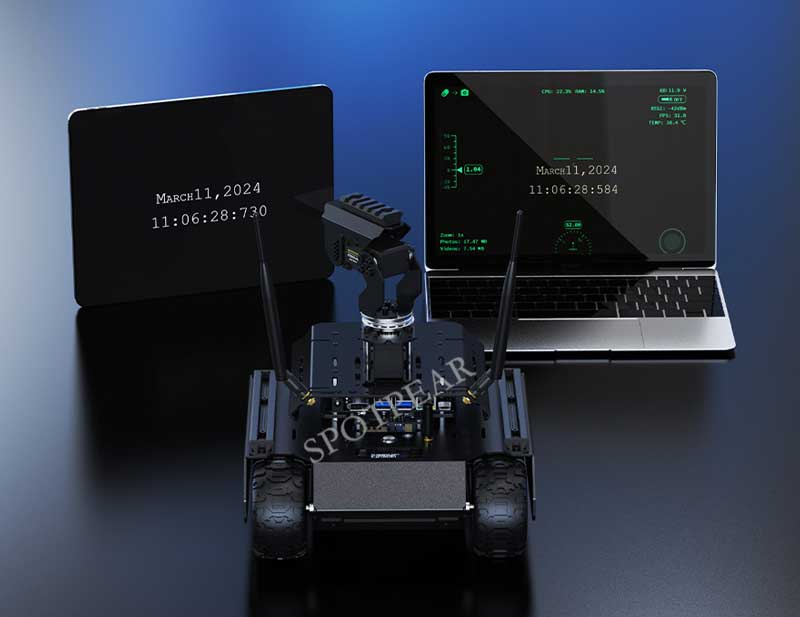

Obtains Real-Time Information Feedback

Real-Time Monitoring Of The Operating Status Of The Robot

Web Page Command Line Tool

Multiple Functions For Easier Expansion

Quick To Set Up, Easy To Expand

Easily Customize And Add New Functions Without Modifying Front-End Code

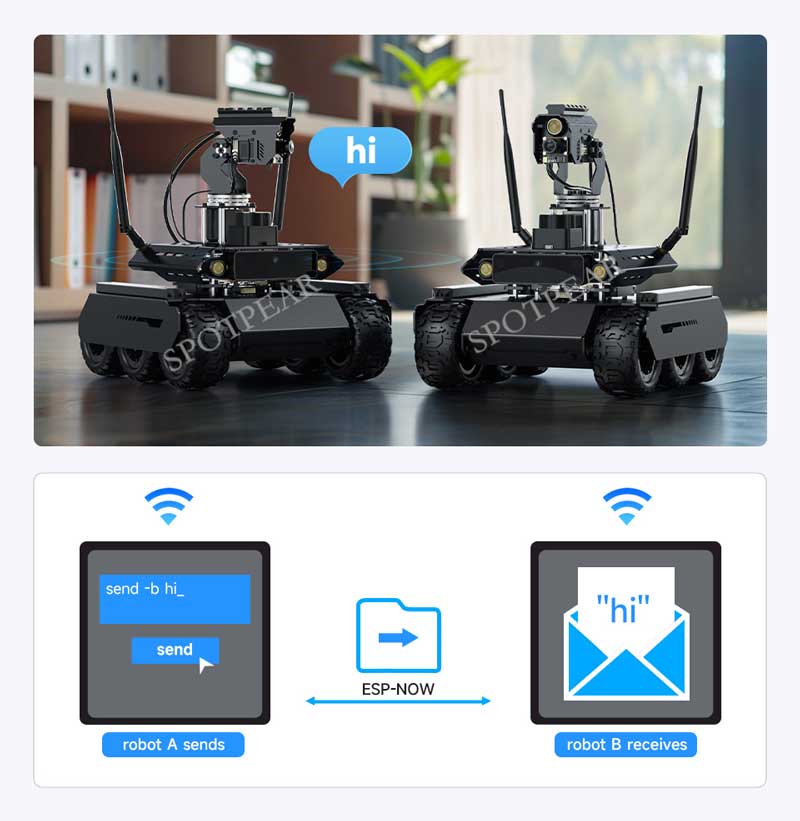

ESP-NOW Wireless Communication Between Robots

Based On ESP-NOW Communication Protocol, Multiple Robots Can Communicate With Each Other Without IP Or MAC Address, Achieving Multi-Device Collaboration With 100-Microsecond Low-Latency Communication

Gamepad Control For Better Operation Experience

Comes With A Wireless Gamepad, Making Robot Control More Flexible. You Can Connect The USB Receiver To Your PC And Control The Robot Remotely Via The Internet. Provides Open Source Demo For Customizing Your Own Interaction Method

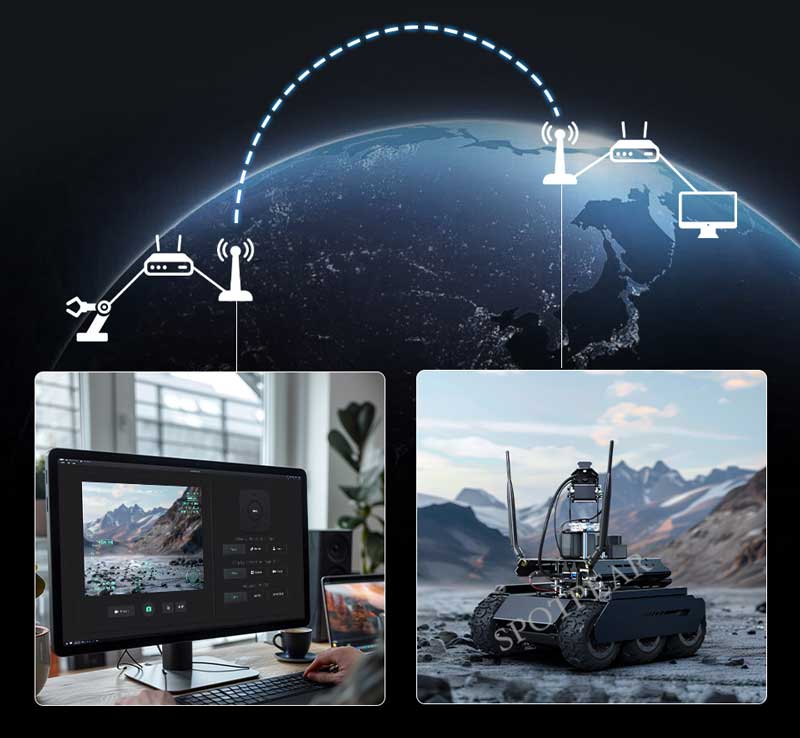

- Our web application demos are based on WebRTC for real-time video transmission.

- WebRTC (Web Real-Time Communications) is a technology that enables web applications and sites to establish peer-to-peer connections, to capture and optionally stream audio and/or video media, and to exchange arbitrary data between browsers without requiring an intermediary.

- We provide comprehensive Ngrok tutorials* to help you get started quickly and realize robot control across the internet.

* Provides the usage tutorials of Ngrok only, we do not provide any Ngrok accounts or Servers. You can follow our tutorial to open your own Ngrok service, or choose other tunneling services according to your needs.

Supports Installing Smartphone Holder

If You Have A Spare Phone, You Can Install It On The Robot Via Holder As Below, Using The Phone To Create A Hotspot For The Robot And Achieving Remote Control Across The Internet At A Lower Cost

* Comes with a smartphone holder with 1/4″ screw in the package

Cross-Platform Interactive Tutorial

Develop While You Learn

Supports Accessing Jupyter Lab Via Devices Such As Mobile Phones And Tablets To Read The Tutorials And Edit The Code On The Web Page, Making Development Easier

Rich Tutorial Resources

We Provide Complete Tutorials And Demos To Help Users Get Started Quickly For Learning And Secondary Development

Open-Source All Demos

Full Dual-Controller Technology Stack

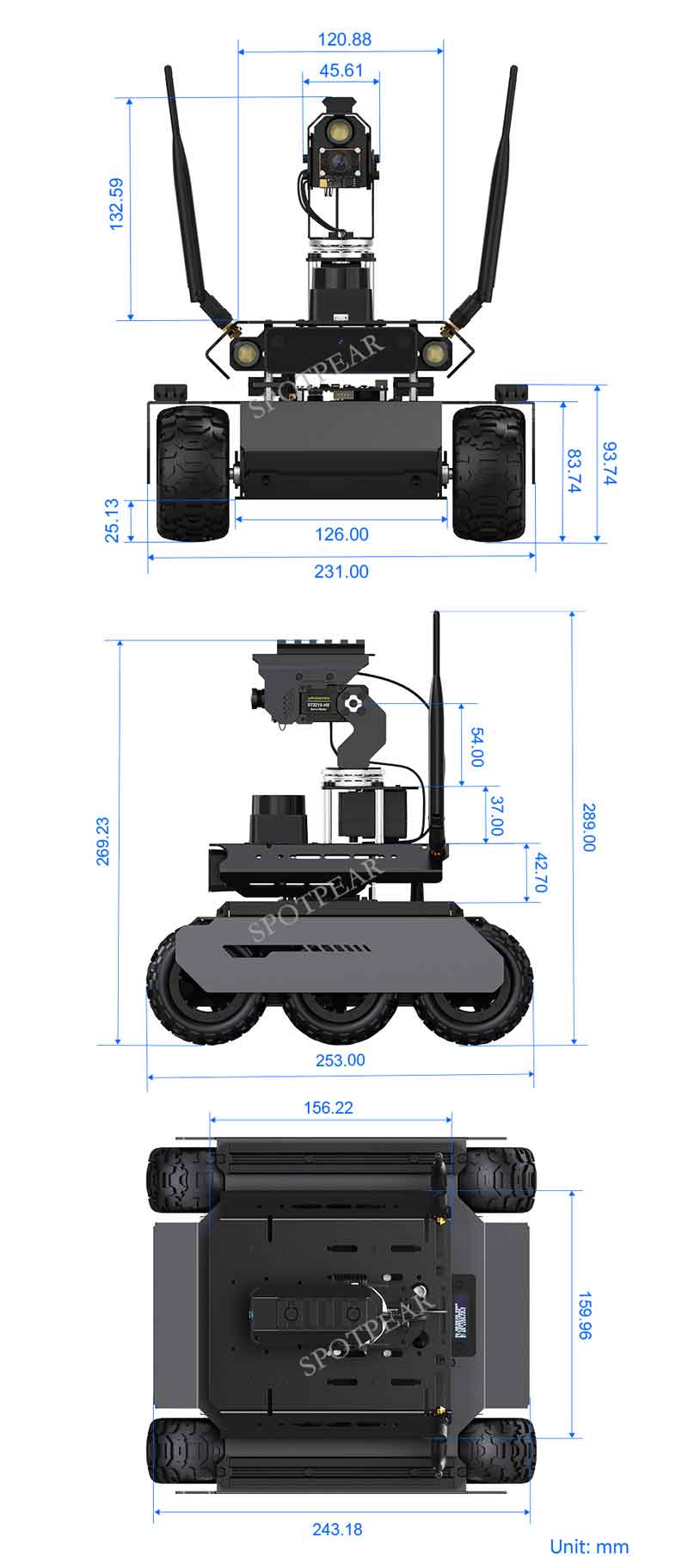

More Design Details

Outline Dimensions