In the article, you provide a good overview of the modules themselves and their general programming. But it's completely unclear how the modules communicate with each other and how to program this communication. For example, there's hardware communication between the ASRPRO and the LCD-ESP32, but how do you make the eyes change their expression immediately after recognizing a phrase? How do the eyes from the MCU-ESP32 even change? What source code is currently being loaded into the device: the regular Movecall Moji or a modified one?


Examples of module interactions

- Answer time:

The workflow is as follows.

The ESP32 controller reads the GPIO levels. Different levels display different video expressions.

The demo we provide for the voice module includes an example that shows which voice commands correspond to which levels.

You don't need to worry about level recognition on the output side; it has already been verified on the ESP32. You only need to modify the voice wake-up word.

If you need to confirm which level corresponds to which voice command and video, refer to our voice wake-up word demo.
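The workflow described above can be modeled roughly as two lookup tables: one on the voice module (phrase to output levels) and one on the display controller (levels to expression). The following is only a sketch of that idea in plain Python, not the vendor's code. The number of signal lines (two), the phrases, the level patterns, and the expression names are all assumptions made for illustration; the real values are in the voice module demo.

```python
# Illustrative model only: pin count, phrases, level patterns, and expression
# names are assumptions, not values taken from the vendor demo.

# Voice-module side: each recognized phrase drives the output pins to a pattern.
PHRASE_TO_LEVELS = {
    "be happy": (0, 1),
    "be angry": (1, 0),
    "go to sleep": (1, 1),
}

# Display side: each level pattern read from the pins selects an expression video.
LEVELS_TO_EXPRESSION = {
    (0, 0): "neutral",
    (0, 1): "happy",
    (1, 0): "angry",
    (1, 1): "sleepy",
}

# Levels output when no known phrase was recognized (assumed idle state).
IDLE_LEVELS = (0, 0)


def levels_for_phrase(phrase):
    """Return the pin levels the voice module would output for a phrase."""
    return PHRASE_TO_LEVELS.get(phrase.strip().lower(), IDLE_LEVELS)


def expression_for_levels(levels):
    """Return the expression the display controller would show for given levels."""
    return LEVELS_TO_EXPRESSION.get(tuple(levels), "neutral")


def simulate(phrase):
    """Trace one phrase end to end: phrase -> pin levels -> expression."""
    return expression_for_levels(levels_for_phrase(phrase))
```

In this model, changing which phrase triggers an expression only touches `PHRASE_TO_LEVELS`, which corresponds to editing the voice module; the display side stays unchanged as long as the level patterns stay the same.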


- Answer time:

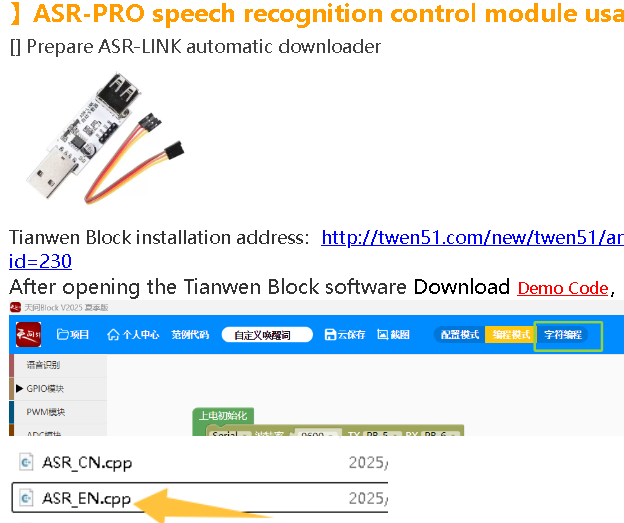

Yes, I already understood from the schematic (but with difficulty) that it won't be possible to implement eye expression changes from the AI-ESP32. However, the page https://spotpear.com/wiki/ESP32-S3-AI-BOX-deepseek-xiaozhi-doubao-0.71inch-LCD-Electronic-Eye-Toy-Doll-Robot.html contains code for the LCD-ESP32, but not for the ASRPRO you're using. Only screenshots of the Tianwen Block control program are available. Could you please add this code or share it with me?


- Answer time:

Thank you so much for the example and instructions!

I have a small question. In the diagram, the ASRPRO is connected to the AI-ESP32 via PA4. I assumed this line lets the ASRPRO wake the AI-ESP32 when it hears a wake-up word, but there are no wake-up words in the code example. Can I use this connection to wake the AI-ESP32 and remove WakeNet from the Xiaozhi code?


- Answer time:

Updated again, please check:

ASR-PRO speech recognition control module usage


- Answer time:

It is divided into two parts.

The wake-up words, such as 【nihao! Xiaozhi】 and 【Hi! ESP】, are handled by the AI-ESP32.

The Xiaozhi account backend lets customers modify the wake-up words themselves; refer to the tutorial.

If you want to change the voice-controlled eye expressions, you need to modify the ASR module.
